Calibration means the comparison and adjustment (if necessary) of an instrument’s response to a stimulus of precisely known quantity, to ensure operational accuracy. In order to perform a calibration, one must be reasonably sure that the physical quantity used to stimulate the instrument is accurate in itself.

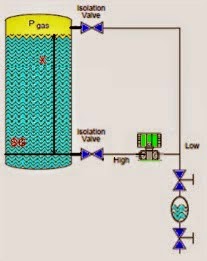

For example, if I try calibrating a pressure gauge to read accurately at an applied pressure of 200 PSI, I must be reasonably sure that the pressure I am using to stimulate the gauge is actually 200 PSI. If it is not 200 PSI, then all I am doing is adjusting the pressure gauge to register 200 PSI when in fact it is sensing something different.
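The effect can be sketched numerically. The following is a minimal illustration, not a calibration procedure: only the 200 PSI target comes from the text, while the 197 PSI actual pressure, the 2% gauge error, and the simple span-only gauge model are assumptions made for the example.

```python
# Hypothetical numbers throughout; only the 200 PSI target comes from the text.
TARGET_PSI = 200.0          # what we believe we are applying
ACTUAL_APPLIED_PSI = 197.0  # assumed: the pressure source is really 3 PSI low
RAW_GAIN = 1.02             # assumed: the unadjusted gauge reads 2% high


def span_correction(raw_reading: float, target: float) -> float:
    """Gain that makes the gauge display `target` for the given raw reading."""
    return target / raw_reading


def displayed(true_psi: float, correction: float) -> float:
    """Gauge indication after the span adjustment has been applied."""
    return correction * RAW_GAIN * true_psi


# "Calibrate" so the gauge shows 200 PSI while it actually senses 197 PSI.
correction = span_correction(RAW_GAIN * ACTUAL_APPLIED_PSI, TARGET_PSI)

at_reference = displayed(ACTUAL_APPLIED_PSI, correction)
at_true_200 = displayed(200.0, correction)

print(f"Indication at the (wrong) reference: {at_reference:.2f} PSI")
print(f"Indication at a true 200 PSI:        {at_true_200:.2f} PSI")
print(f"Error inherited from the reference:  {at_true_200 - 200.0:+.2f} PSI")
```

Under these assumptions the gauge looks perfect at the calibration point, yet it reads roughly 3 PSI high at a true 200 PSI: the adjustment removed the gauge's own error and replaced it with the error of the reference.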

Ultimately, this is a philosophical question of epistemology: how do we know what is true? There are no easy answers here, but teams of scientists and engineers known as metrologists devote their professional lives to the study of calibration standards to ensure we have access to the best approximation of “truth” for our calibration purposes. Metrology is the science of measurement, and the central repository of expertise on this science within the United States of America is the National Institute of Standards and Technology, or the NIST (formerly known as the National Bureau of Standards, or NBS).

Experts at the NIST work to ensure we have means of tracing measurement accuracy back to intrinsic standards, which are quantities inherently fixed (as far as anyone knows). The resonant frequency of an isolated cesium-133 atom when stimulated by microwave energy (9,192,631,770 Hz), for example, is an intrinsic standard used for the measurement of time (forming the basis of the so-called atomic clock). So far as anyone knows, this frequency is fixed in nature and cannot vary: each and every isolated cesium atom has the exact same resonant frequency. The length spanned in a vacuum by 1,650,763.73 wavelengths of light emitted by an excited krypton-86 (⁸⁶Kr) atom served as the intrinsic standard for one meter of length from 1960 until 1983, when the meter was redefined as the distance light travels in a vacuum in 1/299,792,458 of a second. Again, so far as anyone knows, these quantities are fixed in nature and cannot vary. This means any suitably equipped laboratory in the world should be able to build its own intrinsic standards to reproduce the exact same quantities based on the same (universal) physical constants. The accuracy of an intrinsic standard is ultimately a function of nature rather than a characteristic of the device. Intrinsic standards therefore serve as absolute references against which we may calibrate certain instruments.

The machinery necessary to replicate intrinsic standards for practical use is quite expensive and usually delicate. This means the average metrologist (let alone the average industrial instrument technician) will never have access to one. While the concept of an intrinsic standard is tantalizing in its promise of ultimate accuracy and repeatability, maintaining one is beyond the reach of most laboratories.

An example of an intrinsic standard is this Josephson junction array in the primary metrology lab at the Fluke Corporation’s headquarters in Everett, Washington:

A Josephson junction functions as an intrinsic standard for voltage, generating extremely precise DC voltages in response to a DC excitation current and a microwave radiation flux. Josephson junctions are superconducting devices, and as such must be operated in an extremely cold environment, hence the dewar vessel filled with liquid helium on the right-hand side of the photograph. The microwave radiation flux itself must be of a precisely known frequency, as the Josephson voltage varies in direct proportion to this frequency. Thus, the microwave frequency source is synchronized with the NIST’s atomic clock (another intrinsic standard).
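The proportionality between microwave frequency and voltage can be made concrete with the standard Josephson relation, V = n·f/K_J, where K_J = 2e/h is the Josephson constant. The sketch below is illustrative only: the relation and the constants are standard physics, but the 75 GHz drive frequency, the 10 V target, and the idealization that every junction sits on the same voltage step are assumptions, not details of the Fluke array.

```python
# Exact SI defining constants (2019 redefinition of the SI).
ELEMENTARY_CHARGE = 1.602176634e-19  # coulombs
PLANCK_CONSTANT = 6.62607015e-34     # joule-seconds

# Josephson constant K_J = 2e/h, in hertz per volt.
K_J = 2 * ELEMENTARY_CHARGE / PLANCK_CONSTANT


def josephson_voltage(frequency_hz: float, step: int = 1, junctions: int = 1) -> float:
    """Ideal DC voltage of a series array: V = junctions * step * f / K_J.

    Idealization (assumed): every junction is biased on the same step.
    """
    return junctions * step * frequency_hz / K_J


MICROWAVE_HZ = 75e9  # assumed drive frequency, for illustration only

per_junction = josephson_voltage(MICROWAVE_HZ)
junctions_for_10v = round(10.0 / per_junction)  # hypothetical 10 V target

# A fractional error in frequency appears as the same fractional error in voltage.
FREQUENCY_ERROR = 1e-9  # one part per billion, assumed
shifted = josephson_voltage(MICROWAVE_HZ * (1 + FREQUENCY_ERROR))
relative_voltage_error = shifted / per_junction - 1

print(f"Voltage per junction:       {per_junction * 1e6:.2f} microvolts")
print(f"Junctions needed for 10 V:  {junctions_for_10v}")
print(f"Relative voltage error:     {relative_voltage_error:.2e}")
```

Each junction contributes only on the order of a hundred microvolts at this frequency, so a practical output requires tens of thousands of junctions in series. The last line shows why the frequency source matters so much: a one part per billion frequency error becomes a one part per billion voltage error.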

While theoretically capable of generating voltages with uncertainties in the low parts per billion range, a Josephson array such as this one maintained by Fluke is quite an expensive beast, and too impractical for most working labs and shops to justify owning. In order for these intrinsic standards to be useful within the industrial world, we use them to calibrate other instruments, which are then used to calibrate other instruments, and so on until we arrive at the instrument we intend to calibrate for field service in a process. So long as each instrument in this “chain” is calibrated against the one above it regularly enough to ensure good accuracy at the end-point, we may calibrate our field instruments with confidence. This documented confidence is known as NIST traceability: the assurance that the accuracy of the field instrument we calibrate is ultimately backed by a trail of documentation leading to intrinsic standards maintained by the NIST. That trail shows anyone interested that the accuracy of our calibrated field instruments can be traced, link by link, to the best standards available.
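One way to picture the chain is to track how uncertainty grows from link to link. The sketch below assumes each calibration step adds an independent uncertainty that combines with the earlier ones by root-sum-square, a common convention for uncorrelated contributions. The instrument names and parts-per-million figures are hypothetical and chosen only to show the pattern.

```python
import math

# Hypothetical chain: (instrument, uncertainty added at that link, in ppm).
chain = [
    ("intrinsic standard", 0.01),
    ("laboratory reference standard", 1.0),
    ("shop working calibrator", 10.0),
    ("field instrument", 100.0),
]


def accumulate(links):
    """Return (name, cumulative uncertainty) pairs using root-sum-square.

    Assumes the contributions are independent (uncorrelated).
    """
    results = []
    sum_of_squares = 0.0
    for name, added_ppm in links:
        sum_of_squares += added_ppm ** 2
        results.append((name, math.sqrt(sum_of_squares)))
    return results


cumulative = accumulate(chain)
for name, total_ppm in cumulative:
    print(f"{name:<32} {total_ppm:10.4f} ppm")
```

With these made-up figures the uncertainty at the field instrument is dominated by the last link, which is the usual argument for keeping each standard several times better than the instrument it calibrates. The uncertainty never shrinks along the chain, so every link must be documented and kept in calibration for the end-point to be trusted.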